Last updated: May 2026

AI is being used inside many organizations, often without leadership visibility, formal approval, or clear governance. Employees are using AI to move faster, summarize information, and reduce friction in daily work. But many organizations still lack clear guardrails around what tools are being used, what data is being entered, and who is verifying or approving the output.

The issue is that AI adoption is outpacing organizations' ability to build processes around it.

Without structure, visibility, and accountability, even the most effective uses of AI can create operational, compliance, and security risks that leadership may not yet fully see.

What is shadow AI?

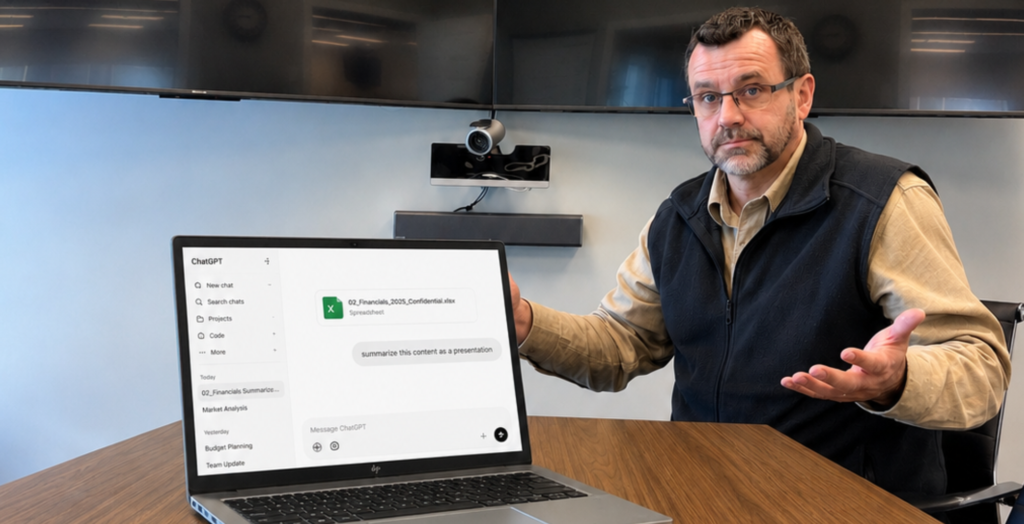

Shadow AI is the use of AI tools inside the workplace without formal approval, organizational visibility, or clear internal governance.

Employees are using AI to clean up emails, summarize meetings, organize spreadsheets, and move faster through operational work. In most cases, they are trying to save time and get work done, not intentionally causing harm.

The risk starts with a leadership dilemma: does anyone know where AI is being used, what information is being entered into these systems, or whether anyone is verifying the output?

AI is spreading faster than organizational oversight

AI is already inside many workplaces, often without leadership fully realizing how frequently it’s being used or how much information employees are already feeding into it.

Employees are using AI to summarize meetings, review spreadsheets, analyze contracts, write presentations, clean up emails, and handle a range of other tasks. But speed without structure can be destructive.

Many organizations now have employees making operational decisions with tools leadership has never formally governed. Some businesses still aren’t sure what AI platforms employees are using, what information is being entered into them, whether outputs are being verified, or where sensitive data may already be exposed.

This is no longer just an IT issue. It is now a leadership responsibility.

Why are employees using AI without clear approval?

“Shadow AI involves the use of unauthorized artificial intelligence tools by employees seeking ways to do their jobs faster, better, or with less friction.” (SHRM, 2025)

If there aren’t policies or guardrails surrounding AI usage in the first place, are your staff being defiant, or are they just unaware?

Using AI to summarize a meeting, organize information, or help with other routine tasks sounds harmless. But when these small actions are multiplied across an organization without guardrails, they create a level of operational exposure and risk that many leadership teams are not set up to manage.

KPMG’s global AI trust study found that 66% of employees surveyed across 47 countries rely on AI output without evaluating its accuracy, and 46% admitted uploading sensitive company information into public AI tools.

The issue is that leadership may have no idea what was entered, what was trusted, or whether anyone checked the answer.

What are your next steps?

The solution starts with visibility.

Ask your team:

- What AI platforms and tools are being used?

- What information is being entered into these platforms?

- Who is reviewing and approving the output?

- Where could sensitive data already be exposed?

From there, organizations can begin creating practical guardrails around AI usage through approved platforms, acceptable-use policies, employee training, verification standards, and clear accountability.

Our role in this solution is to help organizations approach AI strategically rather than reactively. We help businesses and organizations create the structure, governance, and visibility required to use AI safely, before risk turns into operational disruption.

The bottom line

The leadership challenge is communicating where and how AI should be used, backed by clear ownership, guardrails, and processes. Leadership can't govern what it can't see.

Frequently Asked Questions

Is AI safe to use in the workplace?

AI can be safe and highly effective in the workplace when organizations establish clear governance, approved tools, employee training, and safeguards around sensitive information. The risk increases when AI is used without visibility or oversight.

Should businesses ban employees from using AI?

Most organizations will likely benefit more from governing AI use than from banning it entirely. Clear policies, approved tools, training, and visibility can help create stronger long-term outcomes than attempting to eliminate AI usage altogether.

How are employees already using AI at work?

Employees are commonly using AI to summarize meetings, organize spreadsheets, draft communications, analyze documents, research information, and automate repetitive tasks.

What is the biggest AI risk for businesses right now?

The biggest risk is using AI incorrectly and without clear processes. When that happens, organizations can face data leaks, compliance violations, reputational damage, inaccurate reporting, internal and operational mistakes, and decisions based on inaccurate or incomplete information.